On Tuesday, at the Unite Berlin developers conference, Unity unveiled new tools designed specifically for augmented reality that could raise the technology to the next level.

Coming this fall, a new extension for Unity called Project MARS (short for Mixed and Augmented Reality Studio) will enable developers to create robust AR apps "that live in and react to the real world," according to Timoni West, director of XR research at Unity Labs.

"Our motto for this project: Reality is our build target. In other words, we don't want you to just think about building to a device or building to a console," said West during the AR portion of the event's keynote presentation. "We want you to think about how you can make apps that actually live in the real world. Apps that work the way we want augmented reality to act — context aware, flexible, customizable, works in the space."

Project MARS is designed to let developers create AR experiences without custom coding. For example, the extension gives developers a new set of tools to draw AR-specific spatial parameters, such as proximity, plane size, and distance relationships, instead of coding them. There's also a new object type suited for AR development.
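
To give a sense of what hand-coding such conditions might involve, here is a minimal Python sketch. It is not the Project MARS API: the `Plane` type, the `find_plane_pair` helper, and all parameter names are hypothetical, and the logic simply looks for two detected surfaces that meet a minimum size and sit within a given distance of each other.

```python
from __future__ import annotations

from dataclasses import dataclass
from math import dist


@dataclass
class Plane:
    """A detected flat surface (hypothetical type, not a Unity class)."""
    center: tuple[float, float, float]  # position in meters
    width: float                        # meters
    depth: float                        # meters


def big_enough(plane: Plane, min_width: float, min_depth: float) -> bool:
    """Plane-size condition: the surface must meet both minimums."""
    return plane.width >= min_width and plane.depth >= min_depth


def find_plane_pair(planes, min_width, min_depth, max_distance):
    """Return the first two planes that both satisfy the size condition
    and whose centers are within max_distance of each other, or None."""
    candidates = [p for p in planes if big_enough(p, min_width, min_depth)]
    for i, a in enumerate(candidates):
        for b in candidates[i + 1:]:
            if dist(a.center, b.center) <= max_distance:
                return a, b
    return None
```

Every new rule (a different room, a third surface, a height constraint) means more code of this kind, which is the work the drawing tools are meant to replace.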

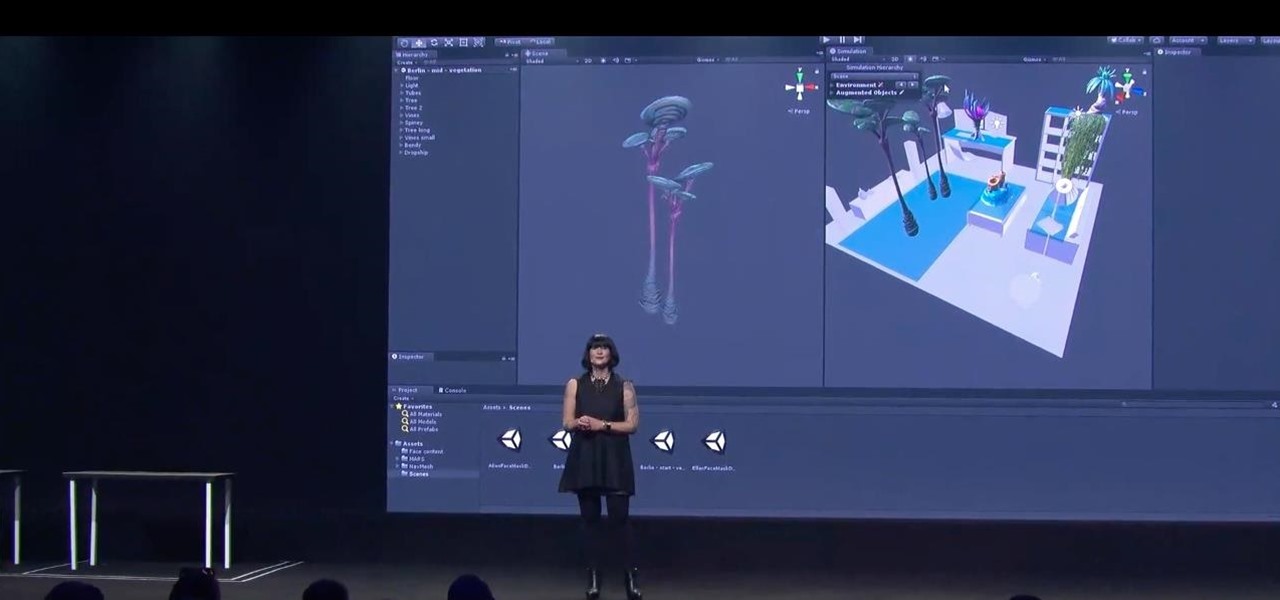

The extension also brings several other improvements via its Simulation View feature. For AR face masks, Simulation View lets developers see how effects work in real time through a connected camera. The Simulation View also includes several room templates to simulate how AR content would react in a real room with variable obstacles. For instance, content may appear differently on a bed in a bedroom versus a couch in a living room, and thereby require adjusted conditions. The Simulation View also works with third-party extensions.

"Before MARS, it would be almost impossible to set up even these relatively simple set of conditions without coding," said West. "With MARS, you can now create complicated, multi-plane experiences in augmented reality that work across a variety of spaces, and you don't even have to leave your desk to check it out."

Using the 3D Game Kit, West and Jono Forbes, a senior software engineer at Unity Labs, demonstrated how Project MARS can be used to create face masks and AR scenes. To showcase the software's superpowers, the team showed a sample scene that included landscapes rendered on two separate tabletops, with a virtual land bridge between them.

After an initial hiccup (because live tech demos always fail), a hero ran from one side to the other over the bridge and smashed an object. To conclude, the character and environment were rendered at full size.

Also coming soon from Unity is a new workflow for facial animation that could render obsolete the contraptions, makeup, and bodysuits currently used for motion capture.

With the Facial AR Remote Component, developers and creators can record high-quality live motion capture performances via the TrueDepth camera on the iPhone X. Unity provides 52 blend shapes to match the performer's facial expressions to those of the animated character.
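
As a rough illustration of what matching blend shapes involves, the Python sketch below retargets one captured frame onto a character. It is not Unity's implementation: the `retarget` function, the character-side names, and the 0 to 100 output scale are assumptions made for the example. The source names `jawOpen` and `eyeBlinkLeft` are two of the coefficients ARKit reports, each in the range 0.0 to 1.0.

```python
def retarget(frame, name_map, scale=100.0):
    """Map captured blend shape coefficients onto a character's shapes.

    frame:    dict of captured coefficient name -> value (expected 0.0 to 1.0)
    name_map: dict of captured name -> character shape name (hypothetical)
    scale:    assumed output range for the character rig (0 to scale)
    """
    weights = {}
    for source, value in frame.items():
        target = name_map.get(source)
        if target is None:
            continue  # the character has no matching shape; skip it
        clamped = min(1.0, max(0.0, value))  # guard against noisy input
        weights[target] = clamped * scale
    return weights


# Example frame: mouth half open, left eye fully closed.
frame = {"jawOpen": 0.5, "eyeBlinkLeft": 1.0}
name_map = {"jawOpen": "Mouth_Open", "eyeBlinkLeft": "Blink_L"}
weights = retarget(frame, name_map)
```

Running this once per captured frame yields a stream of weights that can drive the character's face.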

It's like Animojis on steroids.

The captured performances can then be trimmed and blended together in the Unity Editor, cutting down on the number of takes a performer needs to create to arrive at a final version.

"With facial mocap this accessible, anyone can take advantage of this workflow and start creating cinematic content fast enough to keep up with your ideas," said Natalie Grant, a senior product manager at Unity.

Together, these two new tools, particularly when paired with the evolved mobile toolkits from Apple and Google or the next-generation hardware from Microsoft and Magic Leap, raise the potential for higher-quality augmented reality content and open the door for more developers to start creating AR content.

"We want to help augmented reality content creators make experiences more quickly, and we want them to be able to make more awesome experiences," said West.
