With its 3D engine being responsible for approximately 60% of augmented and virtual reality experiences, Unity is continuing to place a premium on tools that not only keep developers working in its development environment but also make their workflows easier.

During the keynote presentation at Unite Copenhagen today, Unity's AR team, including Timoni West, director of XR for Unity Labs, Brittney Edmond, senior product marketing manager for platforms, and Dan Miller, AR/VR evangelist, took the stage to show off some of the new features coming to the Mixed and Augmented Reality Studio (Project MARS) and AR Foundation, along with some new tools to assist developers in their quests to build AR experiences.

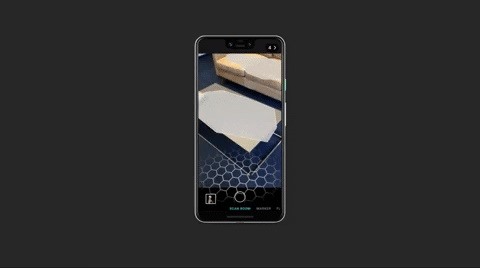

Project MARS is Unity's forthcoming environment for designing AR apps, introduced last year. The big addition announced today comes in the form of companion apps to make mobile AR development faster and enable developers to more easily account for the physical environment.

"You'll be able to sync projects in the Unity Cloud, then lay out assets as easily as placing a 3D sticker. You'll be able to use your phone to create conditions, record video and world data, and export it all back straight into the editor without having to make a build of your project," Edmond said during the keynote.

While the first app will be available for mobile devices, the next iteration will support AR headsets, such as HoloLens 2 and Magic Leap One. These additions will enable developers to capture world data from their headsets and port it directly into the Project MARS Simulation View.

In addition to previewing the mobile app, Edmond and West gave the audience a sneak peek of the Project MARS interface, which includes a simulation view, a what-you-see-is-what-you-get editor, and environment templates. Project MARS will also give developers access to a wide range of AR data, including object tracking, gesture detection, and body and face tracking.

While Unity has not yet set a date for the availability of Project MARS, the company has begun to recruit developers to visit its San Francisco offices for testing. Last week, West put out a call for participants via Twitter, with the sessions available by appointment and led by Bushra Mahmood, principal designer at Unity.

When Project MARS is available to all, it will work on top of AR Foundation, Unity's cross-platform AR toolkit. The toolkit gives developers the ability to build one app that works across various operating systems and their respective AR toolkits. Previously, this meant that AR Foundation could bridge the AR superpowers of ARKit and ARCore. Starting in Unity 2019.3, AR Foundation will support HoloLens and Magic Leap as well.

Continuing on its theme of cross-platform development, Unity also introduced the XR Interaction Toolkit, which will be available in preview via Unity 2019.3. The toolkit will allow developers to add object interactions, from touchscreen interactions in mobile apps to hand and controller tracking for headsets, without having to code for a specific modality. This ability could be crucial in helping mobile developers to adapt ARCore apps for the coming onslaught of Android-based smartglasses from the likes of Nreal and Mad Gaze.

Finally, Unity unveiled the new Unity as a Library, which will give developers the ability to embed the AR functionality from Project MARS, AR Foundation, and XR Interaction Toolkit into existing native apps without having to rebuild them. The library is available in preview now, with its official release coming in Unity 2019.3.

The sum of Unity's new functions will inevitably make it much easier for developers to create apps that work on any AR platform.

For example, Resolution Games adapted Rovio's Angry Birds franchise for Magic Leap a year ago and then created another AR Angry Birds title for iOS earlier this year.

With AR Foundation, Resolution Games could have conceivably built one Angry Birds game that would work not only for Magic Leap and iOS but also Android and HoloLens. The XR Interaction Toolkit would enable the developer to map interactions between touchscreen, hand gesture, and controllers. And Project MARS would give them an environment to simulate how the game would operate in various spaces.

Ultimately, Unity's set of AR tools removes the roadblocks of AR development and lets developers concentrate on the content. Whether this increases the availability of compelling AR experiences remains to be seen. But it certainly doesn't hurt.

