Those of us who are actively developing for the HoloLens, and for the other augmented and mixed reality devices and platforms that currently exist, are constantly looking for the next bit of news or press conference about the space. Our one hope is to find any information about the road ahead, to know that the hours we spend slaving away over our keyboards, with the weight of a head-mounted display on our necks, will lead to something as amazing as we picture it.

All the analysis tends to lead down roads that say 5 to 10 years. And while there is, of course, some wisdom in tempering expectations, there is a small hope that someone makes that next massive breakthrough that will lead to wider adoption. Hope that competition will arise and force the ideas that push the technology further out into the world. That is not to say mass adoption, but the beginning stages that lead to mass adoption.

In a recent keynote at Microsoft de:code in Japan, Microsoft Technical Fellow and HoloLens head creator Alex Kipman finally drew us up a bit of a roadmap. One with a much shorter road ahead. One that a developer can definitely get a bit more excited over.

In his vision, and presumably Microsoft's vision, of the near future, Kipman explained what he sees as a shift from personal computing to collaborative computing. This idea lines up with how many futurists see the world once AR/MR becomes a prevalent technology: a world where we begin to interact with computers while we interact with others around us.

The Personal Computing Era

Personal computing has been around since the early to mid-'70s (there is some debate on the subject), and the term is very apropos. Sitting alone in a basement or bedroom playing a game or building a website or application has been a major part of our existence in that time. For many of us, personal computers have been a part of our lives for our entire lives. It is hard to imagine our world without large desktop computers sitting in the corner of the living room, the office, or maybe even a bedroom. Often all of the above is the case. And in the last ten years, a new form of personal computing hit the world hard: smartphones and tablets.

In what could really be the ultimate form of personal computing, smartphones allow users to walk around computing their hearts out, no longer trapped in a basement, bedroom, or office. The user can go all day accomplishing things, playing games, and even being remotely social with friends and family, while barely looking up from their device. Little-to-no interaction with the physical world around them is not only a possibility, but also a likelihood for many personality types. I know it well; I am one of those.

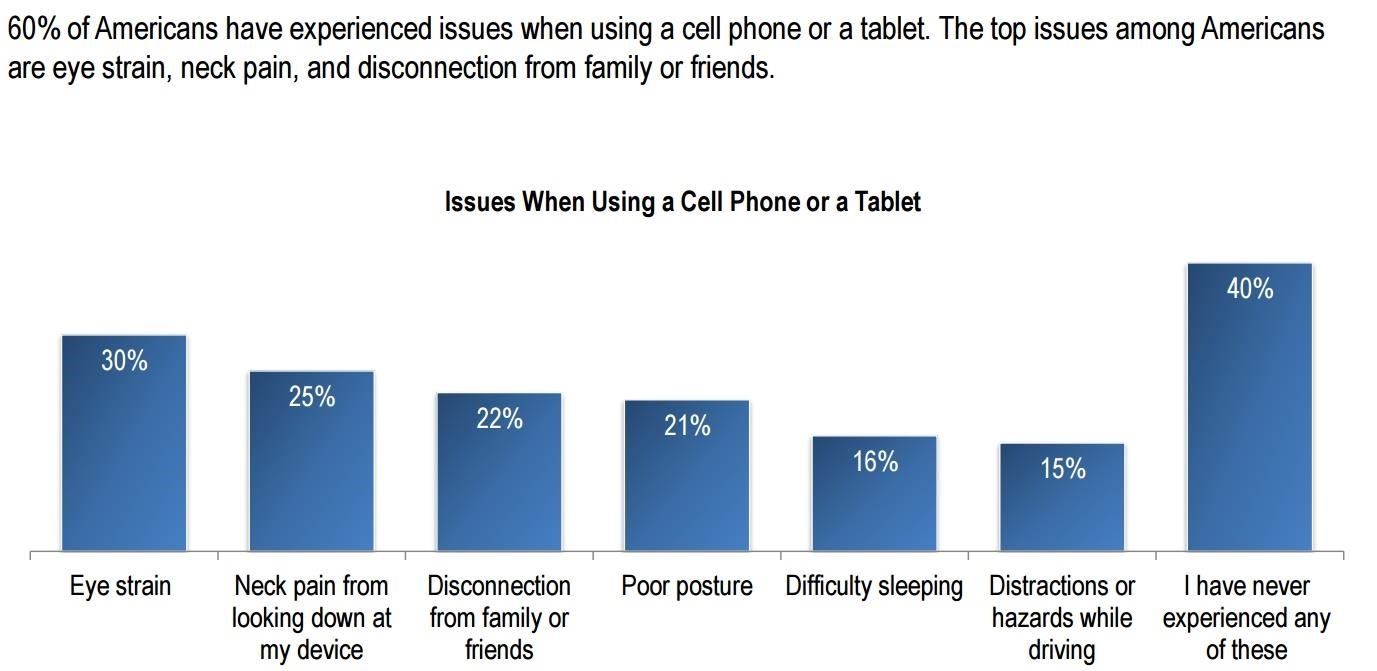

Research has begun to show at least some of the adverse effects that come from constantly hunching over a smartphone. Recent data provided to Next Reality by Lumus illustrates that "60% of Americans have experienced issues when using a cell phone or a tablet. The top issues among Americans are eye strain, neck pain, and disconnection from family or friends."

Of course, as we AR/MR enthusiasts, early adopters, and developers know, there is something better on the horizon, something that will eventually spell doom for the personal computing era. That something is collaborative computing, and Kipman helped present that picture in Japan this month.

In the Next 24 to 36 Months

Narrating over a number of hand-drawn slides at the event in Japan, Alex Kipman demonstrated to the audience his vision of mixed reality computing, proclaiming, "This is what I believe the world will look like in about 24 to 36 months." He went on to explain the shift from personal to collaborative computing as well as the distinction between the two. Personal computing is made up of data that is connected to a person through their device, while collaborative computing is made up of data "anchored to the place."

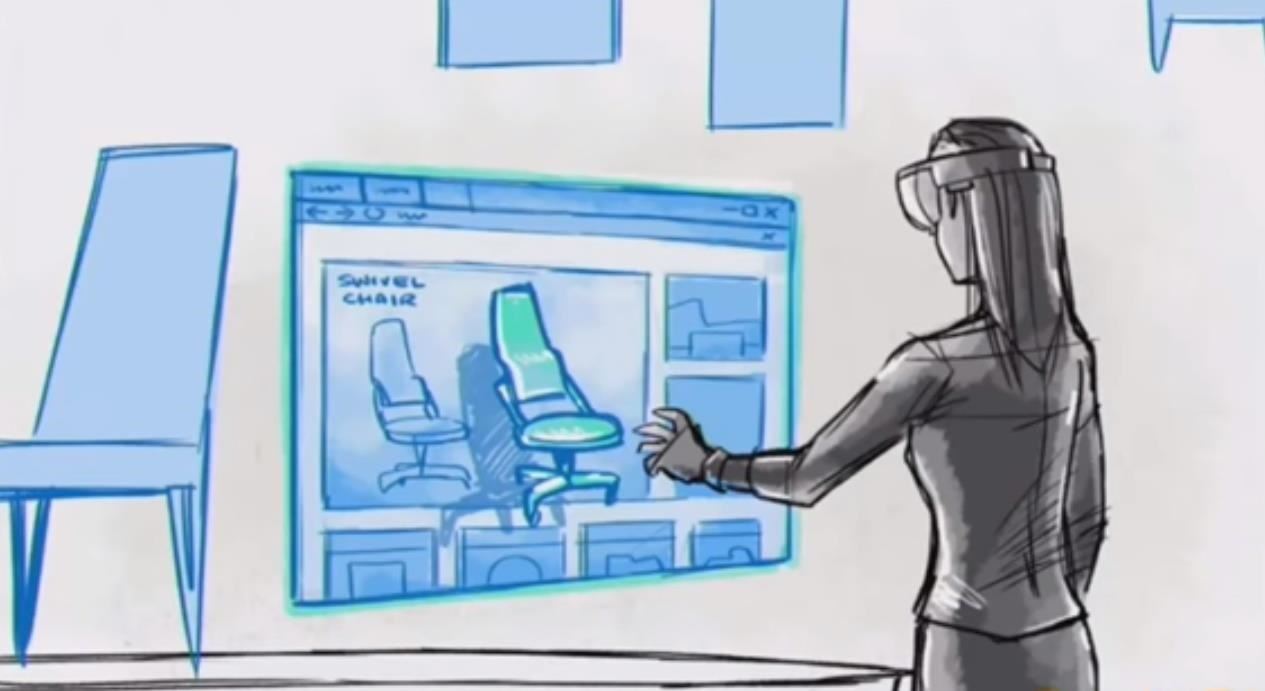

As Kipman's presentation continued, he showed slides of people standing around a table in a room. The room contains a mixture of physical objects and virtual objects, and an AI-based assistant is on the table along with a few digital objects that it could interact with.

As he built on his use-case, he showed the two people inviting other users to the gathering, virtually, each new person coming to this meeting in different virtual forms. Having everyone together allows all the users to give input on the project easily.

He then showed one of the users with a 2D Windows Edge panel open and WebVR, allowing the user to grab a chair from a website and bring it virtually into the world.

Telepresence and holoportation are not new ideas. Some teams are working on their own solutions to that very concept right now, including Microsoft, Valorem, and Mimesys. These collaborative environments, once created and fine-tuned, will likely be the launching point toward the new mixed reality future. The more tools and features that fit into these virtual environments, the more likely it is that one of them becomes the application that leads the charge.

The Here & Now

For the moment, I will just keep developing my ideas and keep cheerleading all of the developers willing to read my writing. I know my efforts are not wasted if I can convince just one person that this picture of the future is worth exploring further.

We all need to keep finding our solutions and use-cases that support how we see this future. Yes, Microsoft and other large companies in and around this space have massive marketing budgets, but marketing only goes so far when it comes to changing the entire world. These companies need the fresh ideas that come from independent developers and designers to help further seed this vision as we continue down this road.

I have seen firsthand how supportive some of these companies are when it comes to these new ideas, and they really do a good job of spreading those seeds far and wide when they appear.

Now, if we are honest with ourselves, we know that Alex Kipman's vision of the near future is optimistic. That's okay. Sometimes all we need is a bit of that type of optimism to push through potentially crippling doubt.

There are a lot of things that have to happen for that future to reach us that soon. The next generation of Moore's law has to arrive, the technology has to fit into a form factor that doesn't look like it came from a space movie, and someone has to find the elusive optics breakthrough that allows a larger field of view while maintaining image quality, brightness, and opacity. The lack of that breakthrough is what is holding all the hardware developers back.

As these puzzle pieces hopefully find their connecting partners over the next two or three years, maybe the work we do will see some positive results and perhaps even some ROI. Regardless, go out there and make something amazing.
