Everything Else

How To: Expand Your Available AR Effects for Your 3D Spectacles Videos

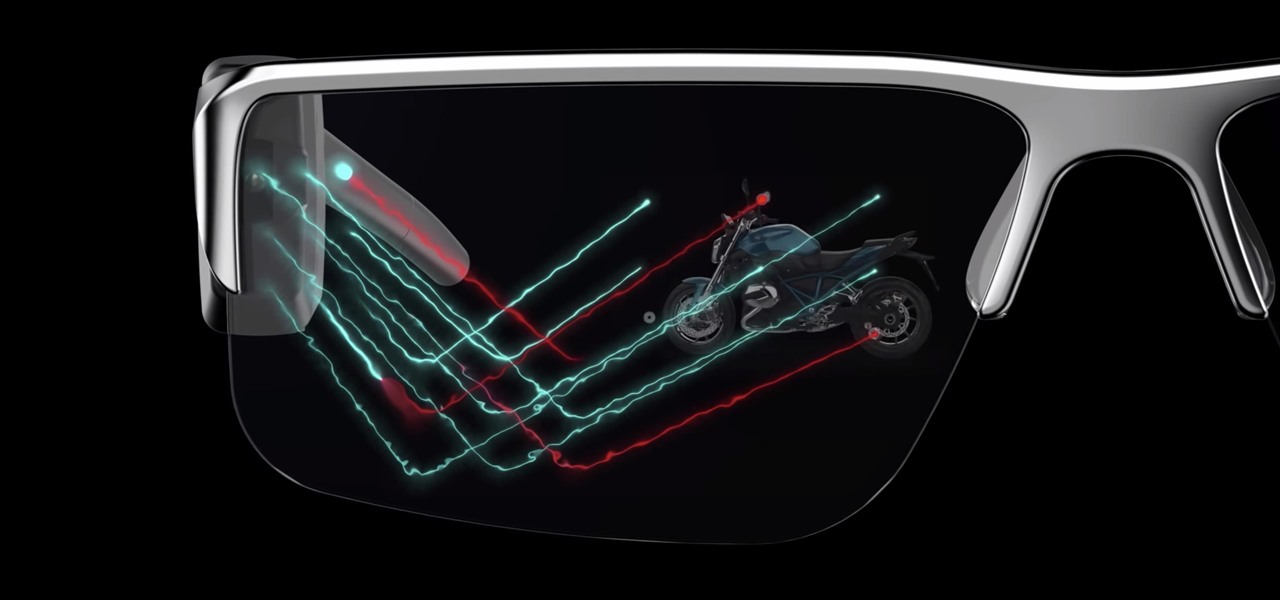

If you have Snap Spectacles 3, the dual camera-equipped sunglasses capable of capturing 3D photos and video, by now you've likely jazzed up the videos you've captured on the wearable with Lenses via Snapchat.

How To: Add Snapchat AR Effects to Your Spectacles 3 Videos

Snapchat parent company Snap took a huge step towards the realm of smartglasses with the third iteration of its camera-equipped Spectacles sunglasses.

AR Dev 101: Create Cross-Platform AR Experiences with Unity & Azure Cloud, Part 1 (Downloading the Tools)

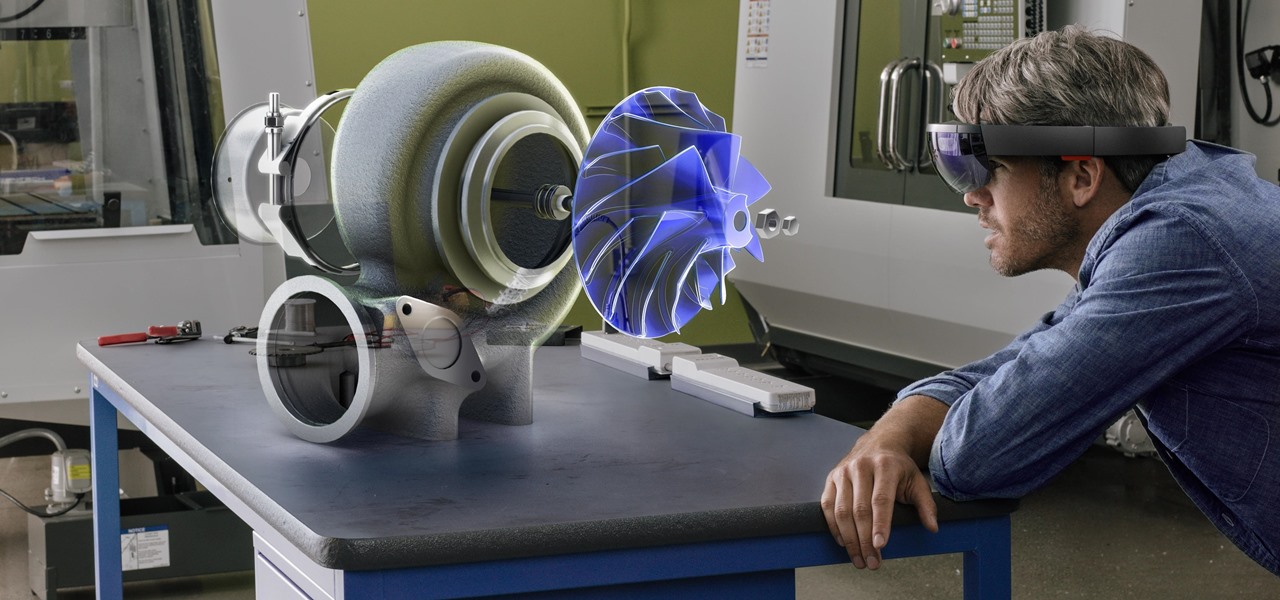

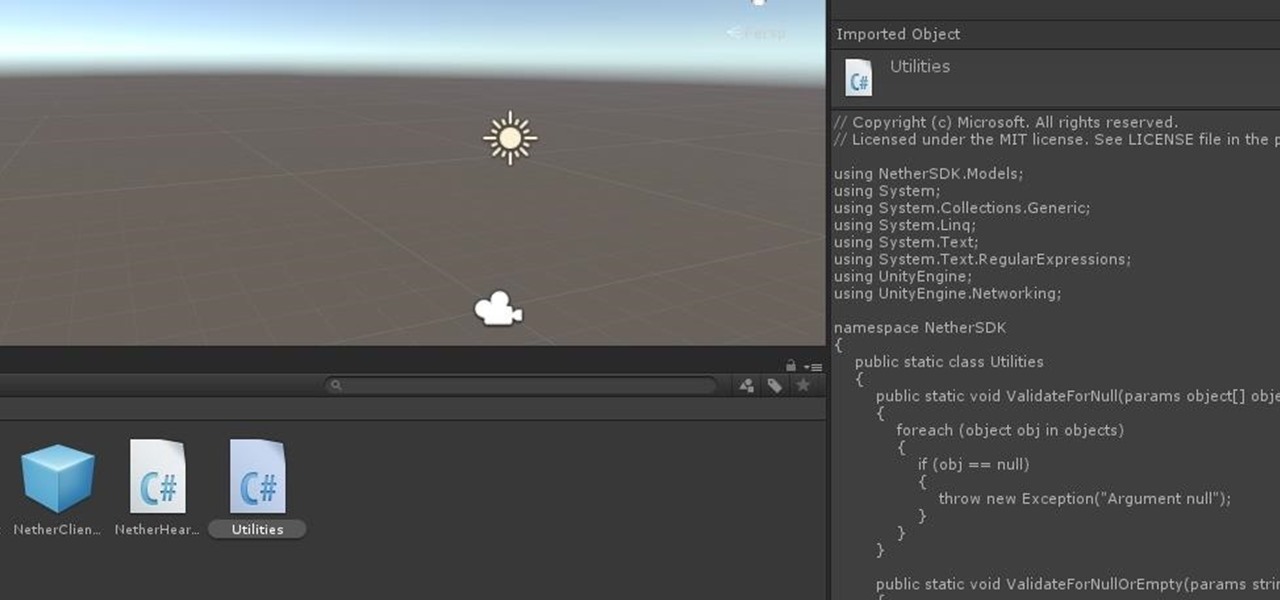

In this series, we are going to get you to the edge of building your own cloud-based, cross-platform augmented reality app for iPhone, Android, HoloLens, and Meta 2, among other devices. Once we get the necessary software installed, we will walk through the process of setting up an Azure account and creating blob storage.

AR Dev 101: Create Cross-Platform AR Experiences with Unity & Azure Cloud, Part 2 (Installing Project Nether & MRTK)

As we aim for a wireless world, technology's reliance on cloud computing services is becoming more apparent every day. As 5G begins rolling out later this year and network communications become even faster and more reliable, so grows our dependency on the services offered in the cloud.

How To: The Quick & Dirty Guide to the Augmented Reality Terms You Need to Know

Every industry has its own jargon, acronyms, initialisms, and terminology that serve as shorthand to make communication more efficient among veteran members of that particular space. But while handy for insiders, those same terms can often create a learning curve for novices entering a particular field. The same holds true for the augmented reality (also known as "AR") business.

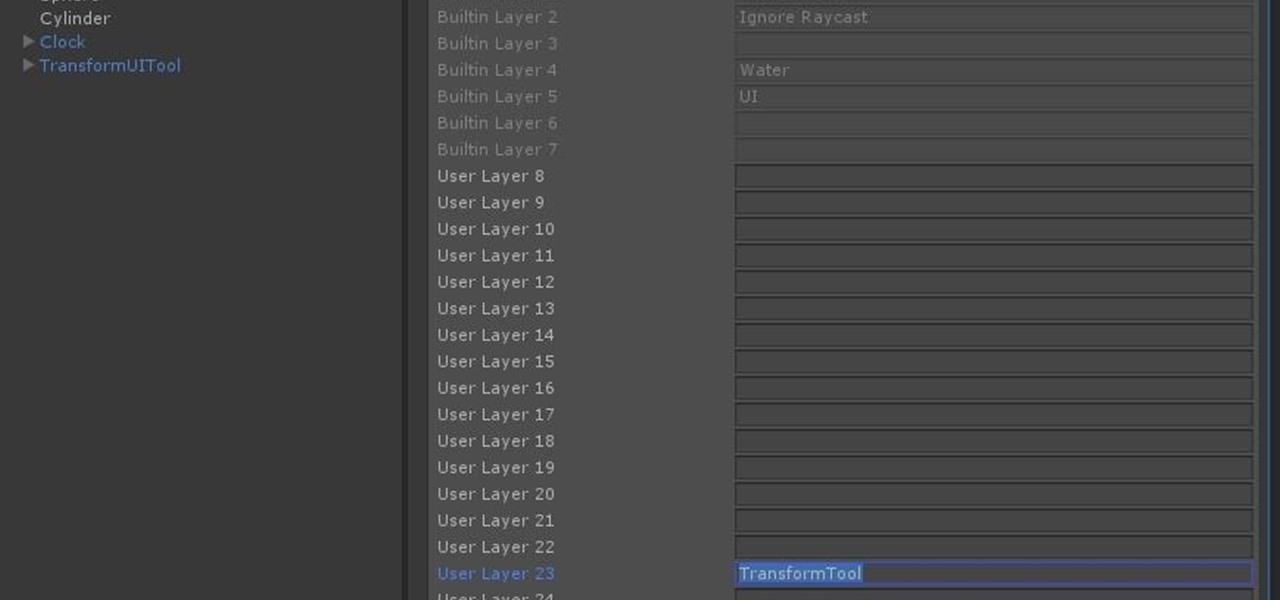

HoloLens Dev 101: Building a Dynamic User Interface, Part 8 (Raycasting & the Gaze Manager)

Now that we have unlocked the menu movement, which is working very smoothly, we have to get to work on the gaze manager. But first, we have to make a course correction.

HoloLens Dev 101: Building a Dynamic User Interface, Part 7 (Unlocking the Menu Movement)

In the previous section of this series on dynamic user interfaces for HoloLens, we learned about delegates and events. At the same time, we used those delegates and events not only to attach our menu system to the user's gaze, but also to enable and disable the menu based on certain conditions. Now let's take that knowledge and build on it to make our menu system a bit more comfortable.

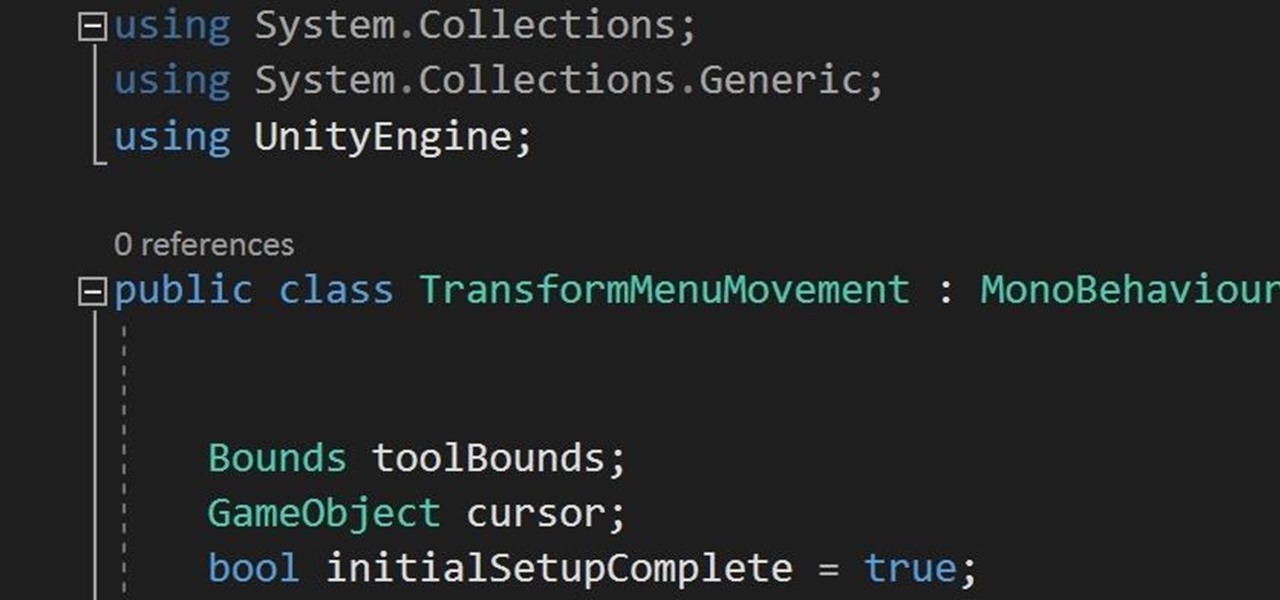

HoloLens Dev 101: Building a Dynamic User Interface, Part 6 (Delegates & Events)

In this chapter, we want to start seeing some real progress in our dynamic user interface. To do that, we will have our newly crafted toolset from the previous chapter appear wherever we are looking whenever our gaze is on an object. To accomplish this, we will be using a very useful part of the C# language: delegates and events.

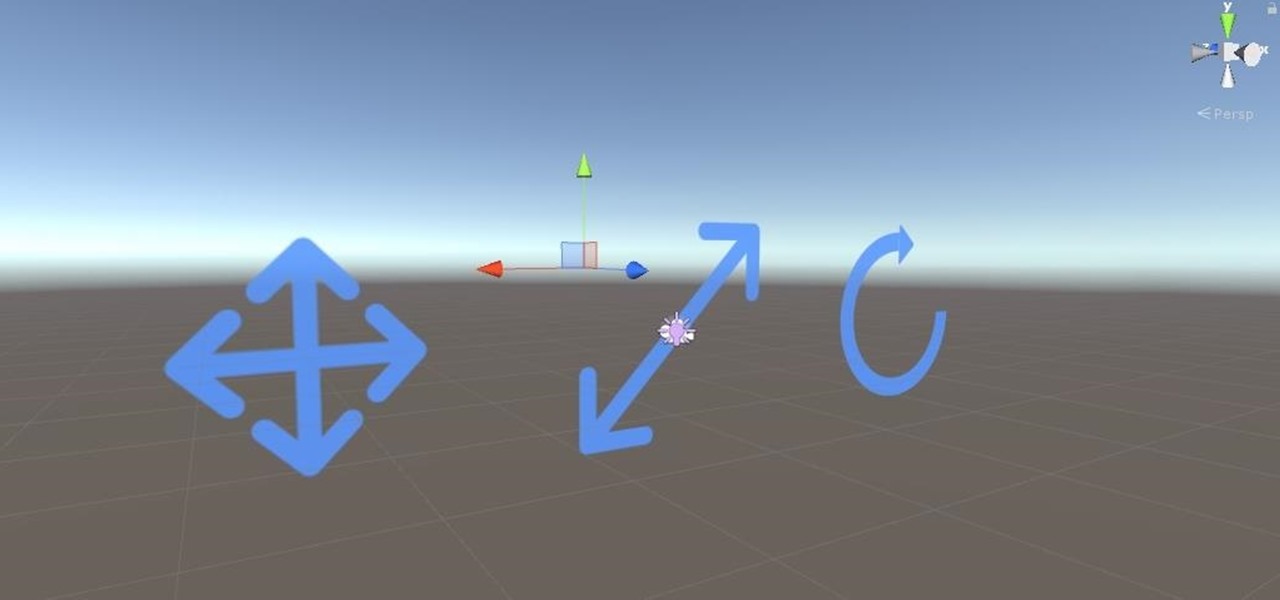

HoloLens Dev 101: Building a Dynamic User Interface, Part 5 (Building the UI Elements)

Alright, calm down and take a breath! I know the object creation chapter was a lot of code. I will give you all a slight reprieve; this section should be nice and simple, at least in comparison.

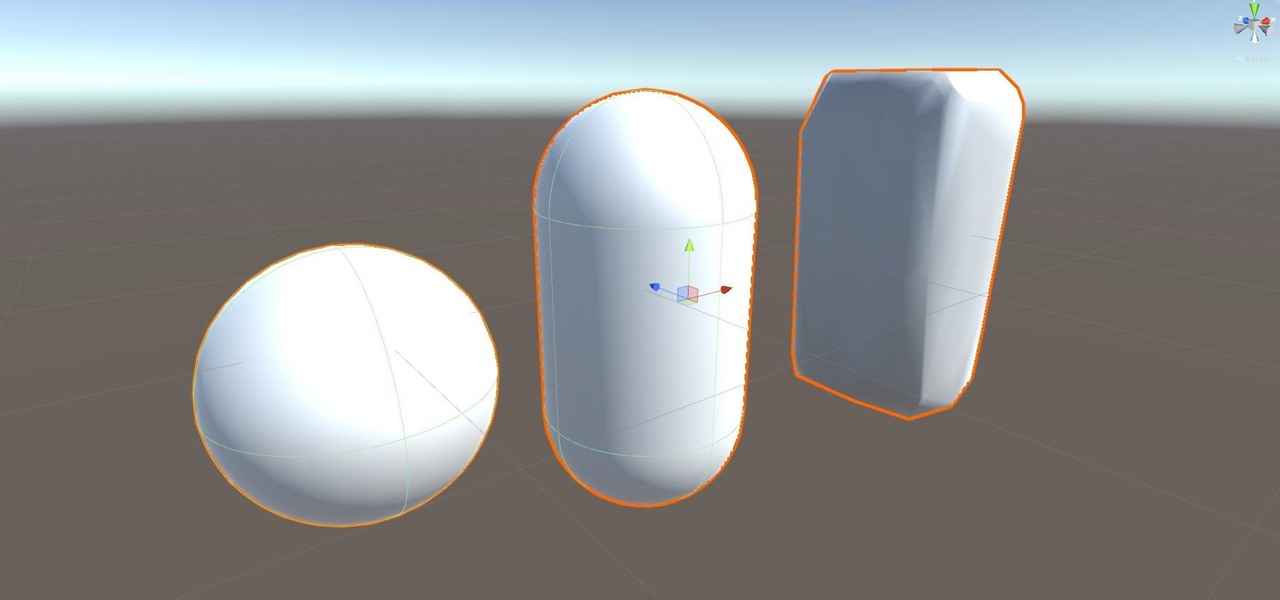

HoloLens Dev 101: Building a Dynamic User Interface, Part 4 (Creating Objects from Code)

After previously learning how to make the material of an object change with the focus of an object, we will build on that knowledge by adding new objects through code. We will accomplish this by creating our bounding box, which in the end is not actually a box, as you will see.

HoloLens Dev 101: Building a Dynamic User Interface, Part 3 (Focus & Materials)

We started with our system manager in the previous lesson in our series on building dynamic user interfaces, but to get there, aside from the actual transform, rotation, and scaling objects, we need to make objects out of code in multiple ways, establish delegates and events, and use the surface of an object to inform our toolset placement.

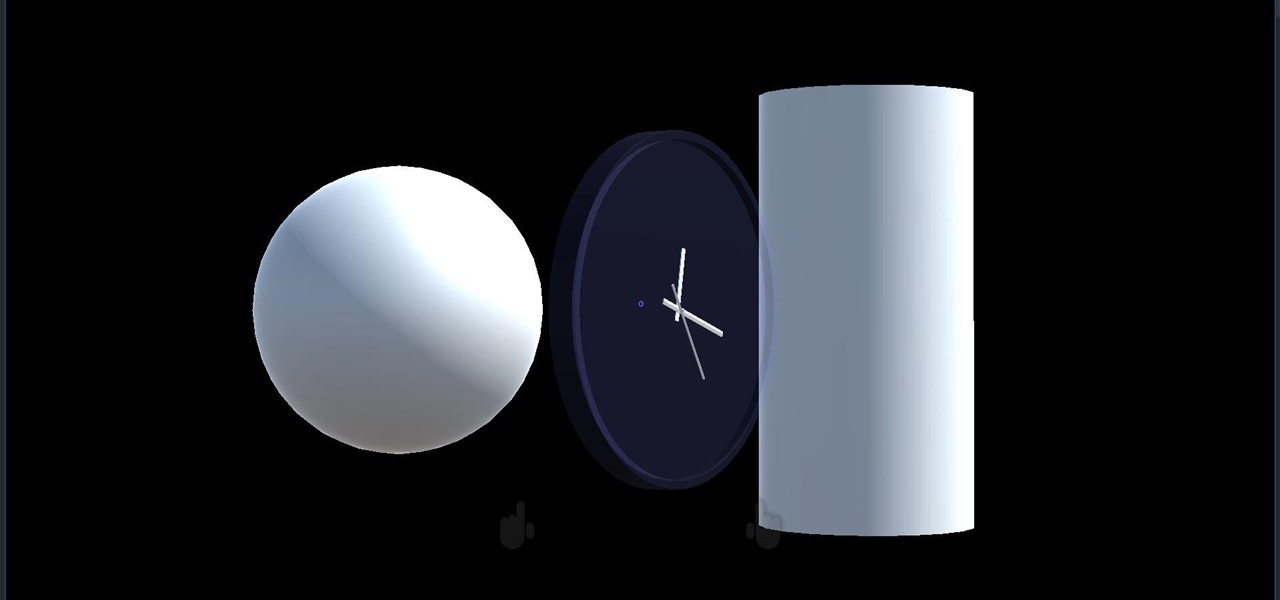

HoloLens Dev 101: Building a Dynamic User Interface, Part 1 (Setup)

Alright, let's dig into this and get the simple stuff out of the way. We have a journey ahead of us. A rather long journey at that. We will learn topics ranging from creating object filtering systems to help us tell when a new object has come into a scene to building and texturing objects from code.

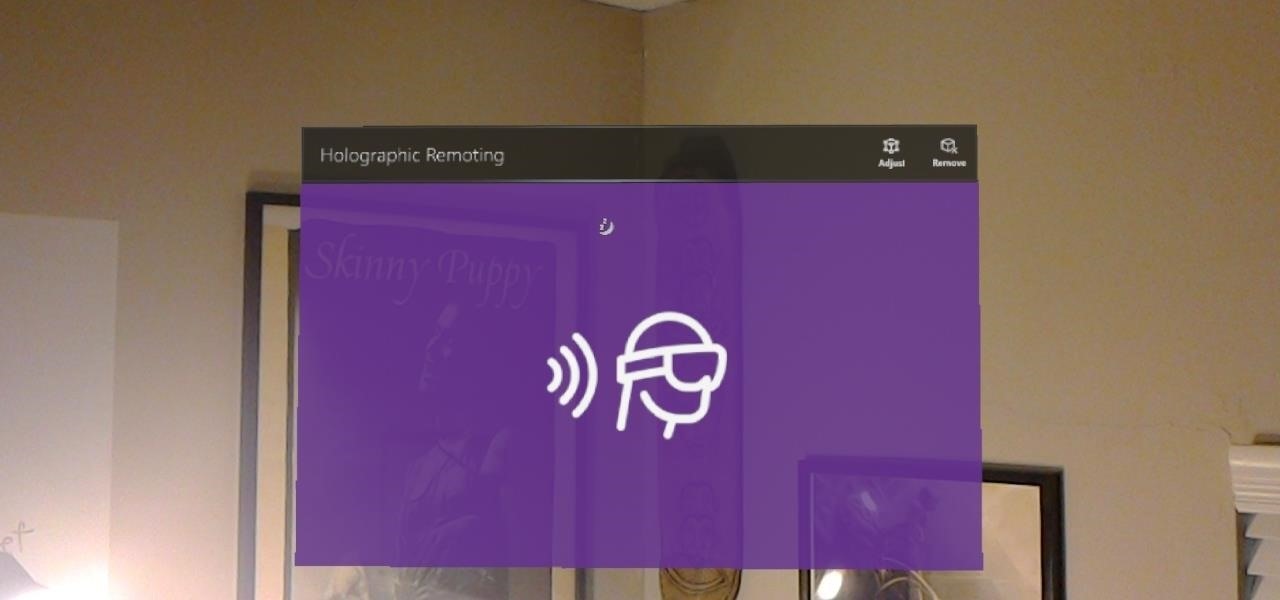

HoloLens Dev 101: How to Use Holographic Remoting to Improve Development Productivity

Way back, life on the range was tough and unforgiving for a HoloLens developer. Air-tap training was cutting edge and actions to move holograms not called "TapToPlace" were exotic and greeted with skepticism. The year was 2016, and developers had to deploy to their devices to test things as simple as gauging a cube's size in real space. Minutes to hours a week were lost to staring at Visual Studio's blue progress bar.

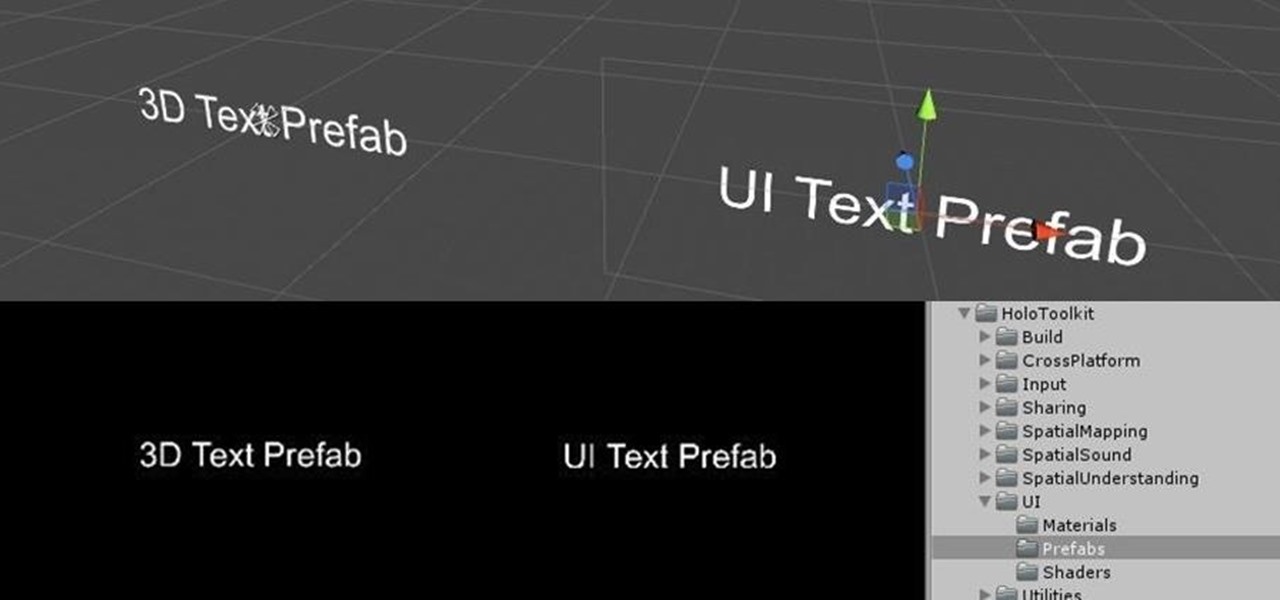

HoloToolkit: Make Words You Can See in Unity with Typography Prefabs

You may remember my post from a couple weeks ago here on NextReality about the magical scaling ratios for typography from Dong Yoon Park, a Principal UX Designer at Microsoft, as well as developer of the Typography Insight app for HoloLens. Well, his ideas have been incorporated into the latest version of HoloToolkit, and I'm going to show you how they work.

How To: Create augmented reality apps

In this video, viewers learn how to create augmented reality applications using Papervision3D version 2.0. Augmented reality is a term for a live direct or indirect view of a physical real-world environment whose elements are merged with virtual computer-generated imagery, creating a mixed reality. To create augmented reality applications, users require the following programs and software: Adobe Flex Builder 3, TortoiseSVN and FLARToolkit. This video tutorial is not recommended for beginne...