Researchers at Disney have demonstrated the ability to render virtual characters in augmented reality that can interact autonomously with their surrounding physical environment.

So imagine that you have a virtual porg placed on your living room floor. Based on the research presented by the team at Disney, it would be possible to have that porg jump up a flight of stairs, or fall over if it were run over by a rambunctious puppy.

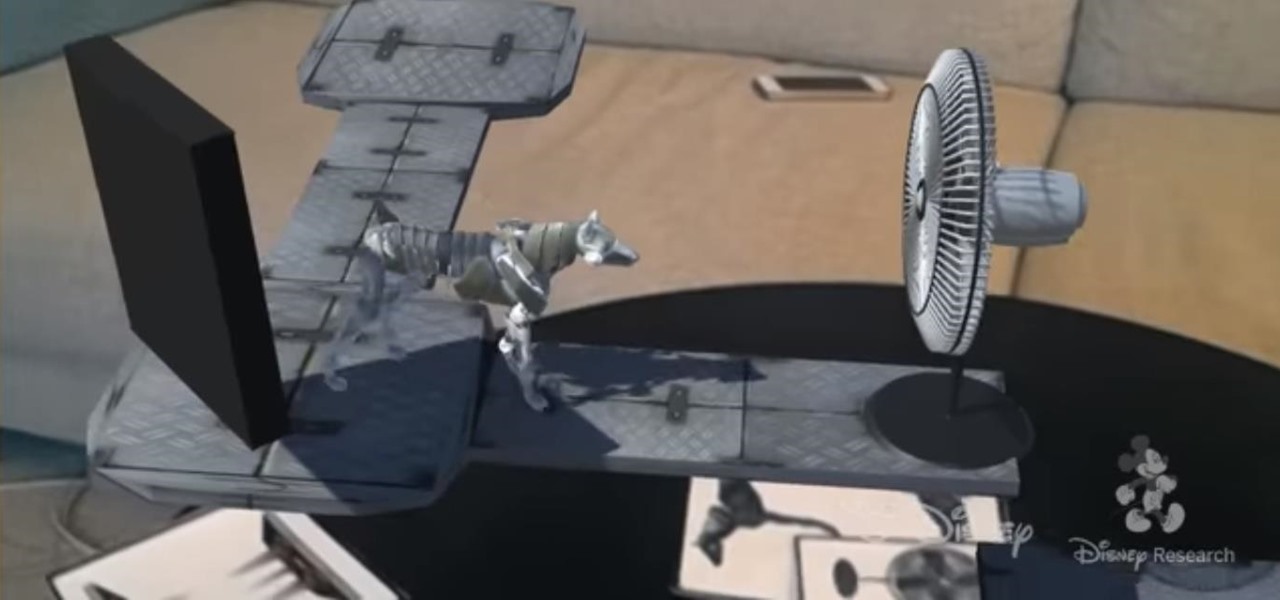

Part of the dynamic lies in modeling the character's motion, particularly in terms of its skeletal structure and joint movement, and examining how that motion adapts to changes in terrain. To that end, the researchers describe the ability to apply animation automatically to a 3D model of a character. This process gives the character locomotion that can adapt to real-world conditions.

To enable a character to intelligently react to its environment, the researchers developed a means for scanning 3D environments by using pre-defined objects and Vuforia's image recognition technology. The virtual character is trained to perform based on the objects or props it recognizes. For instance, a fan prop would be used to stop the character's motion.

The full research paper is available for download at the Disney Research website.

Disney's breakthrough is complementary to recent research published by the Optical Society of America. In that case, a pair of researchers at the University of Arizona College of Optical Sciences presented a method for placing virtual objects in front of and behind various physical objects, achieving mutual occlusion.

The research has potentially significant implications for improving the realism of AR experiences. With today's AR experiences in Snapchat or those built on ARKit, content can be anchored to a horizontal surface, but the experiences cannot fully account for the context of the user's surroundings.

Given this new development, I look forward to the day when I can knock over a Snapchat dancing hot dog with my hand.
