For a while now, Google has offered the ability to translate text through smartphone cameras via Google Translate and Google Lens, with Apple bringing similar technology to iPhones via Live Text.

Hold our beers, say AI researchers at Facebook, who have now developed computer vision technology that can handle real-time text translation and then some in augmented reality.

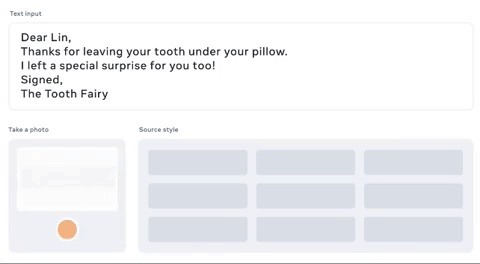

This week, Praveen Krishnan, a postdoctoral researcher for Facebook, and Tal Hassner, research scientist, published research detailing an unsupervised AI model called TextStyleBrush that can view a single typed or handwritten word via a smartphone camera, emulate its typeface, and then virtually replace the original text with another word in the camera view.

"It works similar to the way style brush tools work in word processors, but for text aesthetics in images. It surpasses state-of-the-art accuracy in both automated tests and user studies for any type of text," wrote the researchers in a blog post. "Unlike previous approaches, which define specific parameters such as typeface or target style supervision, we take a more holistic training approach and disentangle the content of a text image from all aspects of its appearance of the entire word box. The representation of the overall appearance can then be applied as one-shot-transfer without retraining on the novel source style samples."

While the current applications are aimed at mobile augmented reality, particularly when compared to the capabilities implemented by Google and Apple, Facebook also has its sights set on integrating TextStyleBrush into its forthcoming smartglasses.

Our researchers built an AI model that can learn to edit the text in any image by training on just a single word. This has huge potential in augmented reality — imagine your AR glasses doing real-time translation of the world, from street signs to handwritten notes pic.twitter.com/O9fHXhrMQI

In addition, TextStyleBrush can use its typeface recognition capability to replicate typeface or handwriting samples and apply them to whole blocks of text. In one example, the system takes a hypothetical typed note from the "tooth fairy" to a child, captures a handwriting sample, and renders the note in that handwriting style on looseleaf paper. It could be a forger's best friend!

Recognizing how this power could fall into the wrong hands, Facebook has opted to make the model's code available as open source.

Overall, this development indicates that an AR smartglasses world won't be shaped only by filters that transform the faces and landscapes we see through our smartglasses. We'll also have the option, for good and bad, of manipulating the very information we view through these upcoming wearable lenses.
